{

"cells": [

{

"cell_type": "markdown",

"id": "f1bfa603",

"metadata": {},

"source": [

"*Copyright (C) 2022 Intel Corporation* \n",

"*SPDX-License-Identifier: BSD-3-Clause* \n",

"*See: https://spdx.org/licenses/*\n",

"\n",

"---"

]

},

{

"cell_type": "markdown",

"id": "3ebce42a",

"metadata": {},

"source": [

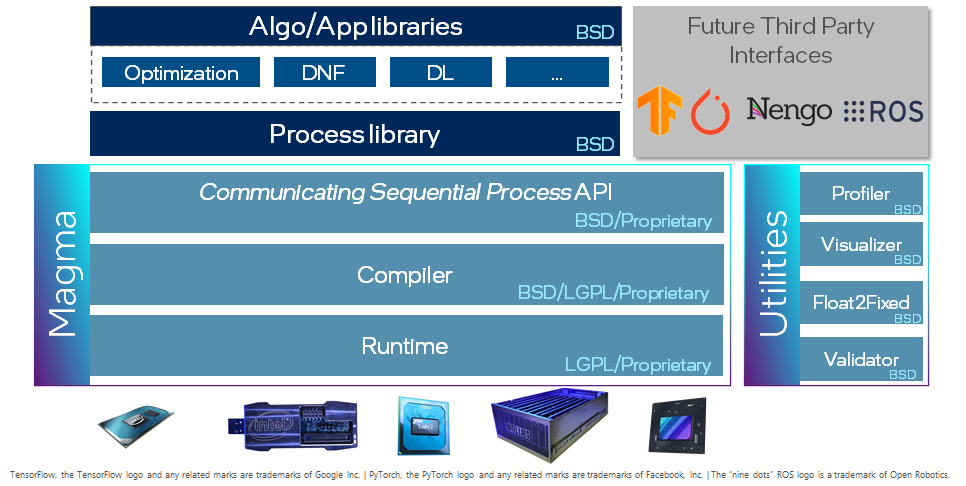

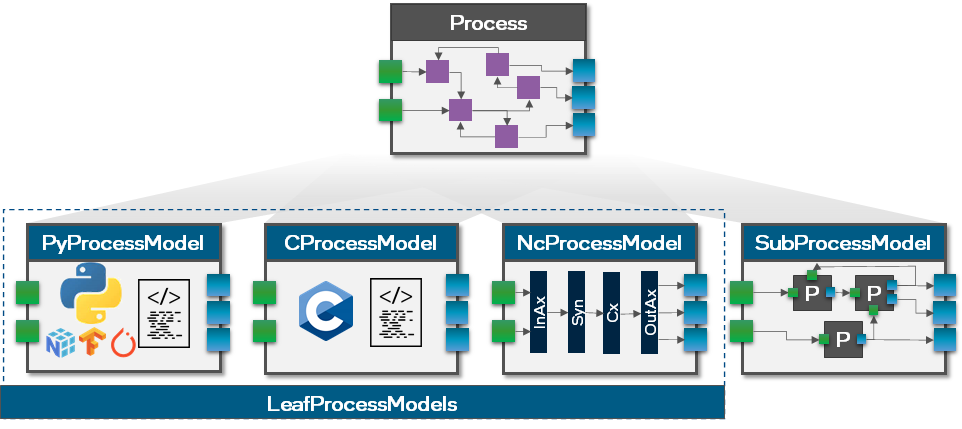

"# Walk through Lava\n",

"\n",

"Lava is an open-source software library dedicated to the development of algorithms for neuromorphic computation. To that end, Lava provides an easy-to-use Python interface for creating the bits and pieces required for such a neuromorphic algorithm. For easy development, Lava allows to run and test all neuromorphic algorithms on standard von-Neumann hardware like CPU, before they can be deployed on neuromorphic processors such as the Intel Loihi 1/2 processor to leverage their speed and power advantages. Furthermore, Lava is designed to be extensible to custom implementations of neuromorphic behavior and to support new hardware backends.\n",

"\n",

"Lava can fundamentally be used at two different levels: Either by using existing resources which can be used to create complex algorithms while requiring almost no deep neuromorphic knowledge. Or, for custom behavior, Lava can be easily extended with new behavior defined in Python and C.\n",

"\n",

"\n",

"\n",

"This tutorial gives an high-level overview over the key components of Lava. For illustration, we will use a simple working example: a feed-forward multi-layer LIF network executed locally on CPU.\n",

"In the first section of the tutorial, we use the internal resources of Lava to construct such a network and in the second section, we demonstrate how to extend Lava with a custom process using the example of an input generator.\n",

"\n",

"In addition to the core Lava library described in the present tutorial, the following tutorials guide you to use high level functionalities:\n",

"- [lava-dl](https://github.com/lava-nc/lava-dl) for deep learning applications\n",

"- [lava-optimization](https://github.com/lava-nc/lava-optimization) for constraint optimization\n",

"- [lava-dnf](https://github.com/lava-nc/lava-dnf) for Dynamic Neural Fields"

]

},

{

"cell_type": "markdown",

"id": "47e4bb81",

"metadata": {},

"source": [

"## 1. Usage of the Process Library"

]

},

{

"cell_type": "markdown",

"id": "910bc90a",

"metadata": {},

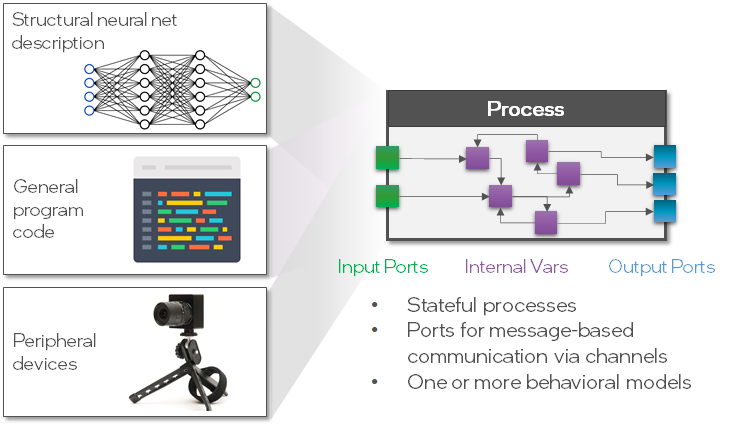

"source": [

"In this section, we will use a simple 2-layered feed-forward network of LIF neurons executed on CPU as canonical example. \n",

"\n",

"The fundamental building block in the Lava architecture is the `Process`. A `Process` describes a functional group, such as a population of `LIF` neurons, which runs asynchronously and parallel and communicates via `Channels`. A `Process` can take different forms and does not necessarily be a population of neurons, for example it could be a complete network, program code or the interface to a sensor (see figure below).\n",

"\n",

"\n",

"\n",

"For convenience, Lava provides a growing Process Library in which many commonly used `Processes` are publicly available.\n",

"In the first section of this tutorial, we will use the `Processes` of the Process Library to create and execute a multi-layer LIF network. Take a look at the [documentation](https://lava-nc.org) to find out what other `Processes` are implemented in the Process Library.\n",

"\n",

"Let's start by importing the classes `LIF` and `Dense` and take a brief look at the docstring."

]

},

{

"cell_type": "code",

"execution_count": 1,

"id": "f5f304d1",

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"\u001B[0;31mInit signature:\u001B[0m \u001B[0mLIF\u001B[0m\u001B[0;34m(\u001B[0m\u001B[0;34m*\u001B[0m\u001B[0margs\u001B[0m\u001B[0;34m,\u001B[0m \u001B[0;34m**\u001B[0m\u001B[0mkwargs\u001B[0m\u001B[0;34m)\u001B[0m\u001B[0;34m\u001B[0m\u001B[0;34m\u001B[0m\u001B[0m\n",

"\u001B[0;31mDocstring:\u001B[0m \n",

"Leaky-Integrate-and-Fire (LIF) neural Process.\n",

"\n",

"LIF dynamics abstracts to:\n",

"u[t] = u[t-1] * (1-du) + a_in # neuron current\n",

"v[t] = v[t-1] * (1-dv) + u[t] + bias # neuron voltage\n",

"s_out = v[t] > vth # spike if threshold is exceeded\n",

"v[t] = 0 # reset at spike\n",

"\n",

"Parameters\n",

"----------\n",

"shape : tuple(int)\n",

" Number and topology of LIF neurons.\n",

"u : float, list, numpy.ndarray, optional\n",

" Initial value of the neurons' current.\n",

"v : float, list, numpy.ndarray, optional\n",

" Initial value of the neurons' voltage (membrane potential).\n",

"du : float, optional\n",

" Inverse of decay time-constant for current decay. Currently, only a\n",

" single decay can be set for the entire population of neurons.\n",

"dv : float, optional\n",

" Inverse of decay time-constant for voltage decay. Currently, only a\n",

" single decay can be set for the entire population of neurons.\n",

"bias_mant : float, list, numpy.ndarray, optional\n",

" Mantissa part of neuron bias.\n",

"bias_exp : float, list, numpy.ndarray, optional\n",

" Exponent part of neuron bias, if needed. Mostly for fixed point\n",

" implementations. Ignored for floating point implementations.\n",

"vth : float, optional\n",

" Neuron threshold voltage, exceeding which, the neuron will spike.\n",

" Currently, only a single threshold can be set for the entire\n",

" population of neurons.\n",

"\n",

"Example\n",

"-------\n",

">>> lif = LIF(shape=(200, 15), du=10, dv=5)\n",

"This will create 200x15 LIF neurons that all have the same current decay\n",

"of 10 and voltage decay of 5.\n",

"\u001B[0;31mInit docstring:\u001B[0m Initializes a new Process.\n",

"\u001B[0;31mFile:\u001B[0m ~/lava-nc/lava/src/lava/proc/lif/process.py\n",

"\u001B[0;31mType:\u001B[0m ProcessPostInitCaller\n",

"\u001B[0;31mSubclasses:\u001B[0m LIFReset\n"

]

},

"metadata": {},

"output_type": "display_data"

}

],

"source": [

"from lava.proc.lif.process import LIF\n",

"from lava.proc.dense.process import Dense\n",

"\n",

"LIF?"

]

},
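{
"cell_type": "markdown",
"id": "a7c1e0d2",
"metadata": {},
"source": [
"As a rough illustration of the dynamics listed in the docstring above, the following plain-Python sketch steps a single neuron through the same four equations. It is not Lava's implementation: `lif_step` is a hypothetical helper, the neuron receives no input (`a_in = 0`), and the parameter values are the ones used for the first unit of `lif1` later in this tutorial.\n",
"\n",
"```python\n",
"# Plain-Python sketch of the LIF update rule quoted in the docstring.\n",
"# This is NOT Lava's implementation; lif_step is a hypothetical helper.\n",
"\n",
"def lif_step(u, v, a_in, du, dv, bias, vth):\n",
"    # Advance one neuron by one time step and return (u, v, spiked).\n",
"    u = u * (1 - du) + a_in      # neuron current\n",
"    v = v * (1 - dv) + u + bias  # neuron voltage\n",
"    spiked = v > vth             # spike if threshold is exceeded\n",
"    if spiked:\n",
"        v = 0.0                  # reset at spike\n",
"    return u, v, spiked\n",
"\n",
"\n",
"# Assumed setup: a single neuron driven only by its bias.\n",
"u, v = 0.0, 0.0\n",
"spike_times = []\n",
"for t in range(100):\n",
"    u, v, spiked = lif_step(u, v, a_in=0.0, du=0.1, dv=0.1, bias=1.1, vth=10.0)\n",
"    if spiked:\n",
"        spike_times.append(t)\n",
"\n",
"print(spike_times)\n",
"```\n",
"\n",
"With a constant bias of 1.1 and `dv = 0.1`, the voltage in this sketch approaches 1.1 / 0.1 = 11, which lies above the threshold of 10, so the neuron spikes periodically even without input."
]
},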

{

"cell_type": "markdown",

"id": "b4dce60e",

"metadata": {},

"source": [

"The docstring gives insights about the parameters and internal dynamics of the `LIF` neuron. `Dense` is used to connect to a neuron population in an all-to-all fashion, often implemented as a matrix-vector product.\n",

"\n",

"In the next box, we will create the `Processes` we need to implement a multi-layer LIF (LIF-Dense-LIF) network."

]

},

{

"cell_type": "code",

"execution_count": 2,

"id": "dbd808cb",

"metadata": {},

"outputs": [],

"source": [

"import numpy as np\n",

"\n",

"# Create processes\n",

"lif1 = LIF(shape=(3, ), # Number and topological layout of units in the process\n",

" vth=10., # Membrane threshold\n",

" dv=0.1, # Inverse membrane time-constant\n",

" du=0.1, # Inverse synaptic time-constant\n",

" bias_mant=(1.1, 1.2, 1.3), # Bias added to the membrane voltage in every timestep\n",

" name=\"lif1\")\n",

"\n",

"dense = Dense(weights=np.random.rand(2, 3), # Initial value of the weights, chosen randomly\n",

" name='dense')\n",

"\n",

"lif2 = LIF(shape=(2, ), # Number and topological layout of units in the process\n",

" vth=10., # Membrane threshold\n",

" dv=0.1, # Inverse membrane time-constant\n",

" du=0.1, # Inverse synaptic time-constant\n",

" bias_mant=0., # Bias added to the membrane voltage in every timestep\n",

" name='lif2')"

]

},

{

"cell_type": "markdown",

"id": "1fbfed43",

"metadata": {},

"source": [

"As you can see, we can either specify parameters with scalars, then all units share the same initial value for this parameter, or with a tuple (or list, or numpy array) to set the parameter individually per unit.\n",

"\n",

"\n",

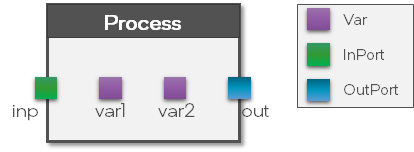

"### Processes\n",

"\n",

"Let's investigate the objects we just created. As mentioned before, both, `LIF` and `Dense` are examples of `Processes`, the main building block in Lava.\n",

"\n",

"A `Process` holds three key components (see figure below):\n",

"\n",

"- Input ports\n",

"- Variables\n",

"- Output ports\n",

"\n",

"\n",

"\n",

"The `Vars` are used to store internal states of the `Process` while the `Ports` are used to define the connectivity between the `Processes`. Note that a `Process` only defines the `Vars` and `Ports` but not the behavior. This is done separately in a `ProcessModel`. To separate the interface from the behavioral implementation has the advantage that we can define the behavior of a `Process` for multiple hardware backends using multiple `ProcessModels` without changing the interface. We will get into more detail about `ProcessModels` in the second part of this tutorial.\n",

"\n",

"### Ports and connections\n",

"\n",

"Let's take a look at the `Ports` of the `LIF` and `Dense` processes we just created. The output `Port` of the `LIF` neuron is called `s_out`, which stands for 'spiking' output. The input `Port` is called `a_in` which stands for 'activation' input."

]

},

{

"cell_type": "code",

"execution_count": 3,

"id": "3f8f656a",

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"['s_out']"

]

},

"execution_count": 3,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"lif1.out_ports.member_names"

]

},

{

"cell_type": "markdown",

"id": "f8ed37d8",

"metadata": {},

"source": [

"For example, we can see the size of the `Port` which is in particular important because `Ports` can only connect if their shape matches."

]

},

{

"cell_type": "code",

"execution_count": 4,

"id": "f77b750d",

"metadata": {},

"outputs": [],

"source": [

"assert(lif1.s_out.size == dense.s_in.size)"

]

},

{

"cell_type": "markdown",

"id": "7378d13d",

"metadata": {},

"source": [

"Similarly we can investigate the input port of the second `LIF` population."

]

},

{

"cell_type": "code",

"execution_count": 5,

"id": "706dc863",

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"['a_in']"

]

},

"execution_count": 5,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"lif2.in_ports.member_names"

]

},

{

"cell_type": "code",

"execution_count": 6,

"id": "521bf370",

"metadata": {},

"outputs": [],

"source": [

"assert(dense.a_out.size == lif2.a_in.size)"

]

},
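{
"cell_type": "markdown",
"id": "c4d9b3e8",
"metadata": {},
"source": [
"Why these port sizes line up can be illustrated with a plain-Python sketch of an all-to-all connection as a matrix-vector product. `dense_forward` and the weight values are hypothetical and chosen only for illustration. The sketch assumes, as for the `Dense` created above, a weight matrix with one row per target neuron and one column per source neuron.\n",
"\n",
"```python\n",
"# Sketch of an all-to-all connection as a matrix-vector product.\n",
"# dense_forward is a hypothetical helper, not part of Lava.\n",
"\n",
"def dense_forward(weights, spikes):\n",
"    # Return one activation per row of the weight matrix.\n",
"    if any(len(row) != len(spikes) for row in weights):\n",
"        raise ValueError('number of weight columns must match number of inputs')\n",
"    return [sum(w * s for w, s in zip(row, spikes)) for row in weights]\n",
"\n",
"\n",
"# Two target neurons and three source neurons, i.e. a (2, 3) weight matrix.\n",
"weights = [[0.5, 0.25, 1.0],\n",
"           [1.0, 0.5, 0.25]]\n",
"spikes = [1, 0, 1]  # source neurons 0 and 2 spiked in this time step\n",
"\n",
"a_out = dense_forward(weights, spikes)\n",
"print(a_out)\n",
"```\n",
"\n",
"Each row of the weight matrix yields one activation, which is why a (2, 3) matrix connects the three units of `lif1` to the two units of `lif2`."
]
},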

{

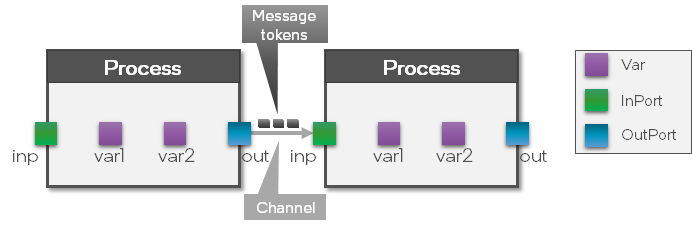

"cell_type": "markdown",

"id": "1c5da64b",

"metadata": {},

"source": [

"Now that we know about the input and output `Ports` of the `LIF` and `Dense` `Processes`, we can `connect` the network to complete the LIF-Dense-LIF structure.\n",

"\n",

"\n",

"\n",

"As can be seen in the figure above, by `connecting` two processes, a `Channel` between them is created which means that messages between those two `Processes` can be exchanged."

]

},

{

"cell_type": "code",

"execution_count": 7,

"id": "657063e9",

"metadata": {},

"outputs": [],

"source": [

"# Connect the OutPort of lif1 to the InPort of dense\n",

"lif1.s_out.connect(dense.s_in)\n",

"\n",

"# Connect the OutPort of dense to the InPort of lif2\n",

"dense.a_out.connect(lif2.a_in)"

]

},

{

"cell_type": "markdown",

"id": "7f0add01",

"metadata": {},

"source": [

"### Variables\n",

"\n",

"Similar to the `Ports`, we can investigate the `Vars` of a `Process`."

]

},

{

"cell_type": "code",

"execution_count": 8,

"id": "d6be4fa0",

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"['bias_exp', 'bias_mant', 'du', 'dv', 'u', 'v', 'vth']"

]

},

"execution_count": 8,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"lif1.vars.member_names"

]

},

{

"cell_type": "markdown",

"id": "971d5ed7",

"metadata": {},

"source": [

"`Vars` are also accessible as member variables. We can print details of a specific `Var` to see the shape, initial value and current value. The `shareable` attribute controls whether a `Var` can be manipulated via remote memory access. Learn more about about this topic in the [remote memory access tutorial](https://github.com/lava-nc/lava/blob/main/tutorials/in_depth/tutorial07_remote_memory_access.ipynb)."

]

},

{

"cell_type": "code",

"execution_count": 9,

"id": "46c18b1f",

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"Variable: v\n",

" shape: (3,)\n",

" init: 0\n",

" shareable: True\n",

" value: 0"

]

},

"execution_count": 9,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"lif1.v"

]

},

{

"cell_type": "markdown",

"id": "7574279a",

"metadata": {},

"source": [

"We can take a look at the random weights of `Dense` by calling the `get` function."

]

},

{

"cell_type": "code",

"execution_count": 10,

"id": "e60c16db",

"metadata": {},

"outputs": [

{

"data": {

"text/plain": [

"array([[0.48667088, 0.24619592, 0.89903799],\n",

" [0.96371252, 0.58821522, 0.37490556]])"

]

},

"execution_count": 10,

"metadata": {},

"output_type": "execute_result"

}

],

"source": [

"dense.weights.get()"

]

},

{

"cell_type": "markdown",

"id": "6afa9b38",

"metadata": {},

"source": [

"

\n",

"Note: There is also a `set` function available to change the value of a `Var` after the network was executed.\n",

"

"

]

},

{

"cell_type": "markdown",

"id": "49a7f22e",

"metadata": {},

"source": [

"### Record internal Vars over time\n",

"\n",

"In order to record the evolution of the internal `Vars` over time, we need a `Monitor`.\n",

"For this example, we want to record the membrane potential of both `LIF` Processes, hence we need two `Monitors`."

]

},

{

"cell_type": "code",

"execution_count": 11,

"id": "635bf66b",

"metadata": {},

"outputs": [],

"source": [

"from lava.proc.monitor.process import Monitor\n",

"\n",

"monitor_lif1 = Monitor()\n",

"monitor_lif2 = Monitor()"

]

},

{

"cell_type": "markdown",

"id": "05dc0a83",

"metadata": {},

"source": [

"We can define the `Var` that a `Monitor` should record, as well as the recording duration, using the `probe` function."

]

},

{

"cell_type": "code",

"execution_count": 12,

"id": "ef93825c",

"metadata": {},

"outputs": [],

"source": [

"num_steps = 100\n",

"\n",

"monitor_lif1.probe(lif1.v, num_steps)\n",

"monitor_lif2.probe(lif2.v, num_steps)"

]

},

{

"cell_type": "markdown",

"id": "84a02b97",

"metadata": {},

"source": [

"

\n",

"Note: Currently, the `Monitor` can only record a single `Var` per `Process` and supports only CPU. This functionality will be extended in future releases.\n",

"

"

]

},

{

"cell_type": "markdown",

"id": "ce0c6495",

"metadata": {},

"source": [

"### Execution\n",

"\n",

"Now, that we finished to set up the network and recording `Processes`, we can execute the network by simply calling the `run` function of one of the `Processes`.\n",

"\n",

"The `run` function requires two parameters, a `RunCondition` and a `RunConfig`. The `RunCondition` defines *how* the network runs (i.e. for how long) while the `RunConfig` defines on which hardware backend the `Processes` should be mapped and executed."

]

},

{

"cell_type": "markdown",

"id": "9a43d818",

"metadata": {},

"source": [

"#### Run Conditions\n",

"\n",

"Let's investigate the different possibilities for `RunConditions`. One option is `RunContinuous` which executes the network continuously and non-blocking until `pause` or `stop` is called."

]

},

{

"cell_type": "code",

"execution_count": 13,

"id": "0cf86c34",

"metadata": {},

"outputs": [],

"source": [

"from lava.magma.core.run_conditions import RunContinuous\n",

"run_condition = RunContinuous()"

]

},

{

"cell_type": "markdown",

"id": "865e2ca9",

"metadata": {},

"source": [

"The second option is `RunSteps`, which allows you to define an exact amount of time steps the network should run."

]

},

{

"cell_type": "code",

"execution_count": 14,

"id": "91fbce5e",

"metadata": {},

"outputs": [],

"source": [

"from lava.magma.core.run_conditions import RunSteps, RunContinuous\n",

"\n",

"run_condition = RunSteps(num_steps=num_steps)"

]

},

{

"cell_type": "markdown",

"id": "2366d304",

"metadata": {},

"source": [

"For this example. we will use `RunSteps` and let the network run exactly `num_steps` time steps.\n",

"\n",

"#### RunConfigs\n",

"\n",

"Next, we need to provide a `RunConfig`. As mentioned above, The `RunConfig` defines on which hardware backend the network is executed.\n",

"\n",

"For example, we could run the network on the Loihi1 processor using the `Loihi1HwCfg`, on Loihi2 using the `Loihi2HwCfg`, or on CPU using the `Loihi1SimCfg`. The compiler and runtime then automatically select the correct `ProcessModels` such that the `RunConfig` can be fulfilled.\n",

"\n",

"For this section of the tutorial, we will run our network on CPU, later we will show how to run the same network on the Loihi2 processor."

]

},

{

"cell_type": "code",

"execution_count": 15,

"id": "14c301f7",

"metadata": {},

"outputs": [],

"source": [

"from lava.magma.core.run_configs import Loihi1SimCfg\n",

"\n",

"run_cfg = Loihi1SimCfg(select_tag=\"floating_pt\")"

]

},

{

"cell_type": "markdown",

"id": "baf95f1f",

"metadata": {},

"source": [

"#### Execute\n",

"\n",

"Finally, we can simply call the `run` function of the second `LIF` process and provide the `RunConfig` and `RunCondition`."

]

},

{

"cell_type": "code",

"execution_count": 16,

"id": "331f71b7",

"metadata": {},

"outputs": [],

"source": [

"lif2.run(condition=run_condition, run_cfg=run_cfg)"

]

},

{

"cell_type": "markdown",

"id": "1d8ea488",

"metadata": {},

"source": [

"### Retrieve recorded data\n",

"\n",

"After the simulation has stopped, we can call `get_data` on the two monitors to retrieve the recorded membrane potentials."

]

},

{

"cell_type": "code",

"execution_count": 17,

"id": "582215cd",

"metadata": {},

"outputs": [],

"source": [

"data_lif1 = monitor_lif1.get_data()\n",

"data_lif2 = monitor_lif2.get_data()"

]

},

{

"cell_type": "markdown",

"id": "22f44fba",

"metadata": {},

"source": [

"Alternatively, we can also use the provided `plot` functionality of the `Monitor`, to plot the recorded data. As we can see, the bias of the first `LIF` population drives the membrane potential to the threshold which generates output spikes. Those output spikes are passed through the `Dense` layer as input to the second `LIF` population."

]

},

{

"cell_type": "code",

"execution_count": 18,

"id": "32f48b10",

"metadata": {

"scrolled": true

},

"outputs": [

{

"data": {